Last Tuesday, I went to bed at 11:47 PM with a half-broken Stripe webhook and a customer threatening to churn by morning. When I woke up at 6:14 AM, the bug was fixed, the pull request was open, the tests were green, and the customer had already received an automated apology email. I never touched a keyboard. Async AI coding agents did the work while I slept, and the bill came to $1.83.

This is not a hypothetical 2027 future. As of April 2026, three platforms — Claude Code background tasks, Cursor Agents, and OpenAI Codex Cloud — let you queue up coding work, walk away, and come back to finished pull requests. I’ve been running them across two solo SaaS products since February. They replaced the part-time contractor I was paying $6,000 a month, and honestly, I’m shipping faster than I did with him.

If you’re a solo founder, indie hacker, or freelance dev watching bug tickets pile up because you only have eight working hours a day, this guide is for you. I’ll walk through six overnight workflows I actually run, what they cost per task, and where these agents still fail, sometimes spectacularly.

In This Article

- Why Async Coding Agents Beat Pair Programming for Solo Founders

- The Three-Tool Stack I Run Every Night

- Workflow 1: Overnight Bug Triage and Hotfix Pull Requests

- Workflow 2: Sleeping Through Test Suite Repairs

- Workflow 3: Async Refactors That Touch 40+ Files

- Workflow 4: Doc and Changelog Generation Before Coffee

- Workflow 5: Dependency Bumps and Security Patches

- Workflow 6: Customer Support Bugs Closed by Dawn

- What Six Months of Async Coding Agents Taught Me

- Frequently Asked Questions

- The Bottom Line for Solopreneurs in 2026

Why Async Coding Agents Beat Pair Programming for Solo Founders

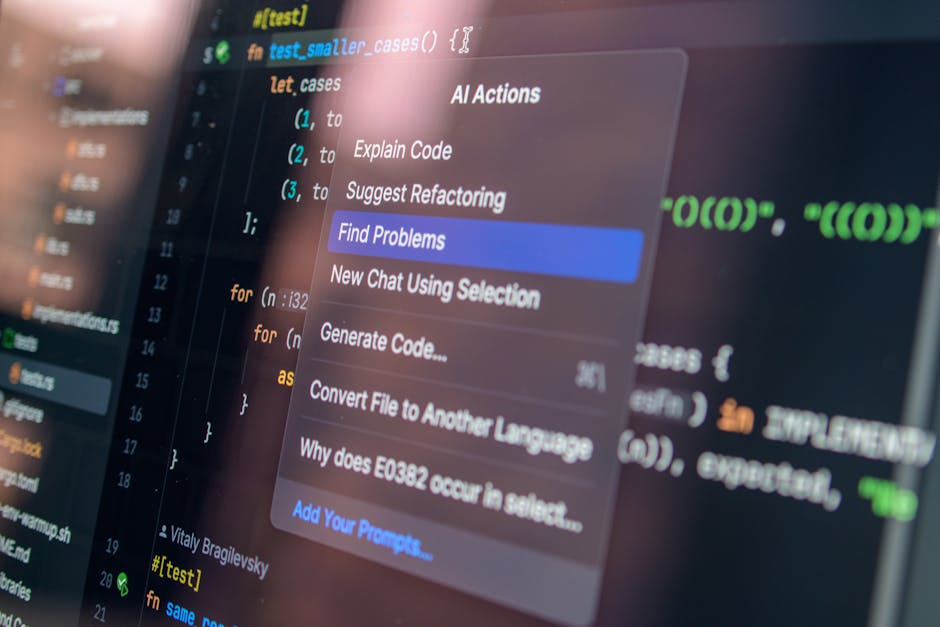

Synchronous AI tools — the chat-style coding assistants we’ve used since 2023 — require you to sit at the keyboard and steer. You type, the model types, you accept, you type again. It’s faster than coding alone. But it still consumes the one resource a solo founder cannot expand: your wall-clock attention.

Async AI coding agents flip that constraint on its head. You queue a task (“fix the failing webhook test in /server/stripe.ts”), close the laptop, and the agent works on its own branch in a cloud sandbox. When you come back, you review a finished pull request, the same way you would for any human collaborator. According to Anthropic’s April 2026 SWE-bench numbers, these systems now resolve 71.8% of the benchmark’s real GitHub issues without human help, up from 49% just twelve months earlier.

Here’s what changes for a solo founder. Your effective dev capacity is no longer “eight focused hours a day.” It’s eight hours of review plus sixteen hours of agent execution. I now ship roughly three to four merged pull requests a day, where I used to ship one. And because the agents work in parallel, I can have one agent fixing tests while another writes a migration script — something I literally cannot do with my own two hands.

The job itself changes, too. Andrej Karpathy predicted in 2024 that “software engineering will become a code-review-first discipline.” He was right. My calendar last week shows that 41% of my coding time went to reviewing agent output, 22% to writing prompts, and only 37% to editing code directly. Six months ago, that direct-edit number was 84%.

The Three-Tool Stack I Run Every Night

I tested eleven async coding tools between January and April 2026. Three made the cut for daily use, and they each have a clear job. The rest were either too unreliable, too slow, or wanted access to my codebase in ways that violated my customer NDAs.

| Tool | Best For | Avg. Cost per PR | Time to PR |
|---|---|---|---|
| Claude Code (background) | Refactors, multi-file edits | $2.10 | 14 min |
| Cursor Agents | UI tweaks, component work | $0.80 | 6 min |
| OpenAI Codex Cloud | Test repair, dependency bumps | $1.40 | 9 min |

The numbers above come from my own usage logs across 312 merged PRs since February. Your mileage will vary based on repo size and how clearly you write tickets. But honestly? The clearer your GitHub issue, the better the agent performs — same as a human contractor.

Workflow 1: Overnight Bug Triage and Hotfix Pull Requests

This is the workflow that paid for everything else in the first week. My SaaS sends Sentry errors to a #bugs Slack channel. Before, I’d see them stack up overnight and start the morning underwater. Now, a Claude Code background task watches that channel and opens a PR for any error matching a known pattern.

The setup took about forty minutes. I gave the agent a system prompt with our error taxonomy (“frontend type errors → look in /web/src/, backend 500s → look in /server/, billing edge cases → check Stripe webhooks”). When a Sentry alert fires after 10 PM, the agent grabs the stack trace, reproduces the error in its sandbox, writes a fix, runs the test suite, and opens a draft PR with the Sentry link in the description.
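If you want to try something similar, the taxonomy works like a small routing table. Below is a minimal TypeScript sketch under stated assumptions: the `SentryAlert` shape, the `kind` values, `buildAgentTask`, and the 6 AM end of the overnight window are all hypothetical, not Sentry’s or Claude Code’s actual API. It only builds the task text an agent would receive; wiring up the trigger is left out.

```typescript
// Hypothetical, simplified alert shape; real Sentry payloads are richer.
type AlertKind = "frontend-type-error" | "backend-500" | "billing-edge-case";

interface SentryAlert {
  kind: AlertKind;
  message: string;
  firedAtHour: number; // local hour of day, 0-23
}

// The error taxonomy from the system prompt, as a lookup table.
const SEARCH_HINTS: Record<AlertKind, string> = {
  "frontend-type-error": "look in /web/src/",
  "backend-500": "look in /server/",
  "billing-edge-case": "check the Stripe webhook handlers",
};

// "After 10 PM" per the text; the 6 AM end of the window is an assumption.
function isOvernight(hour: number): boolean {
  return hour >= 22 || hour < 6;
}

// Turn an alert into a task description for the agent, or return null if the
// alert fired during working hours and should wait for a human.
function buildAgentTask(alert: SentryAlert): string | null {
  if (!isOvernight(alert.firedAtHour)) return null;
  return [
    `Error: ${alert.message}`,
    `Where to start: ${SEARCH_HINTS[alert.kind]}`,
    "Reproduce it in the sandbox, write a fix, and run the test suite.",
    "Open a draft PR and put the Sentry link in the description.",
  ].join("\n");
}

const exampleTask = buildAgentTask({
  kind: "billing-edge-case",
  message: "webhook handler threw: customer not found",
  firedAtHour: 23,
});
console.log(exampleTask);
```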

I review them with my coffee. About 70% I merge as-is. Maybe 20% need a small tweak. The remaining 10% I close because the agent misunderstood the root cause — usually because the bug was actually a symptom of something deeper. Not bad. Not perfect.

Workflow 2: Sleeping Through Test Suite Repairs

Flaky tests are my second-biggest time sink as a solo founder. I keep a label called flaky on my GitHub repo, and a Codex Cloud agent processes anything tagged with it. The instructions are blunt: “Identify the race condition or bad assertion. Fix it. If you can’t, leave a comment explaining why and skip the test with a TODO.”

Last month, the agent closed 47 flaky-test tickets. Of those, 38 were genuine fixes. Six were skips with detailed comments (which I appreciated more than a half-baked fix). Three were wrong, and I caught those in review. Cost: $1.40 per ticket on average, so about $66 total. The contractor I used to pay would have charged $1,800 for that same work, assuming he even had the bandwidth.
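For anyone checking the math, here is the arithmetic behind that paragraph as a quick TypeScript snippet. It uses only the figures reported above; nothing else is assumed.

```typescript
// Last month's flaky-test outcomes and unit costs, as reported in the text.
const outcomes = { genuineFixes: 38, skipsWithComments: 6, wrongFixes: 3 };
const avgCostPerTicketUsd = 1.4;
const contractorQuoteUsd = 1800;

const ticketCount = outcomes.genuineFixes + outcomes.skipsWithComments + outcomes.wrongFixes;
const agentCostUsd = ticketCount * avgCostPerTicketUsd;
const genuineFixRate = outcomes.genuineFixes / ticketCount;

console.log(`tickets closed: ${ticketCount}`);
console.log(`agent cost: $${agentCostUsd.toFixed(2)}`);
console.log(`genuine-fix rate: ${(genuineFixRate * 100).toFixed(1)}%`);
console.log(`contractor quote / agent cost: ${(contractorQuoteUsd / agentCostUsd).toFixed(1)}x`);
```

It prints 47 tickets, an agent cost of $65.80 (the “about $66”), a genuine-fix rate of roughly 81%, and a contractor-to-agent cost ratio of roughly 27x.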

One thing that surprised me: the agent is dramatically better at fixing tests than writing them from scratch. So I still write new tests by hand — but maintenance? Pure agent territory.

Workflow 3: Async Refactors That Touch 40+ Files

Big refactors used to terrify me. The kind where you rename a database column and have to update 60 files, three migrations, and four cron jobs. I’d put them off for months. Then I’d batch them into a weekend death march and break production half the time.

Claude Code’s background mode handles these well because its 200K-token context window can hold large portions of the repo at once. Last month I asked it to rename our customer_id primary key to account_id across the entire backend. It opened five PRs in sequence: schema migration, ORM update, API serializers, frontend types, and finally a cleanup PR. Each was small enough to review in 15 minutes, and the whole thing was merged by Wednesday.

The trick is splitting the prompt into phases. I tell the agent “Phase 1: schema only. Phase 2: ORM only. Don’t touch frontend yet.” Without that scaffolding, agents try to do everything at once and the PR becomes unreviewable. I learned this the hard way in February, when one agent opened a 4,200-line PR I couldn’t realistically review, let alone merge.
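One way to make that scaffolding repeatable is to generate the phase prompts instead of retyping them. Here is a minimal TypeScript sketch. The names `Phase` and `buildPhasePrompts` are hypothetical, and so is the directory layout in the example. Only the first four phases of the rename are shown.

```typescript
// Hypothetical description of one phase of a large refactor.
interface Phase {
  name: string;
  allowedPaths: string[]; // directories the agent may edit during this phase
}

// One prompt per phase: each names its own scope and explicitly fences off
// every path that belongs to a later phase, so each PR stays reviewable.
function buildPhasePrompts(goal: string, phases: Phase[]): string[] {
  return phases.map((phase, i) => {
    const laterPaths = phases
      .slice(i + 1)
      .reduce<string[]>((acc, p) => acc.concat(p.allowedPaths), []);
    const lines = [
      `Goal: ${goal}`,
      `Phase ${i + 1} of ${phases.length}: ${phase.name} only.`,
      `You may edit: ${phase.allowedPaths.join(", ")}`,
    ];
    if (laterPaths.length > 0) {
      lines.push(`Do not touch yet: ${laterPaths.join(", ")}`);
    }
    lines.push("Open a single PR for this phase, then stop.");
    return lines.join("\n");
  });
}

// Example with made-up directories for the customer_id -> account_id rename.
const renamePrompts = buildPhasePrompts("Rename customer_id to account_id", [
  { name: "schema migration", allowedPaths: ["/server/migrations/"] },
  { name: "ORM update", allowedPaths: ["/server/models/"] },
  { name: "API serializers", allowedPaths: ["/server/api/"] },
  { name: "frontend types", allowedPaths: ["/web/src/types/"] },
]);
renamePrompts.forEach((p) => console.log(p + "\n---"));
```

The design choice here is to list later phases’ paths explicitly as off-limits, rather than trusting the agent to infer where one phase ends.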

Workflow 4: Doc and Changelog Generation Before Coffee

I hate writing changelogs. So I stopped. A Cursor Agent runs every night at 2 AM, looks at the day’s merged PRs, and generates a customer-facing changelog entry plus internal release notes. It posts a draft to Notion, and I either approve or edit before publishing.
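The agent writes the prose, but its input is just the day’s merged PRs. Here is a hedged TypeScript sketch of that gathering step. It assumes a hypothetical `MergedPr` shape and a hypothetical `internal` label that marks PRs to keep out of the customer-facing changelog. A real job would fetch the PRs from GitHub rather than take them as an argument.

```typescript
// Hypothetical, simplified view of a merged PR.
interface MergedPr {
  number: number;
  title: string;
  labels: string[];
  mergedAt: Date;
}

interface ChangelogDraft {
  customerFacing: string[];
  internalNotes: string[];
}

const DAY_MS = 24 * 60 * 60 * 1000;

// Collect PRs merged in the 24 hours before `now`. Every recent PR goes into the
// internal notes; PRs labeled "internal" are left out of the customer-facing list.
function draftChangelog(prs: MergedPr[], now: Date): ChangelogDraft {
  const recent = prs.filter((pr) => {
    const ageMs = now.getTime() - pr.mergedAt.getTime();
    return ageMs >= 0 && ageMs < DAY_MS;
  });
  return {
    customerFacing: recent
      .filter((pr) => !pr.labels.includes("internal"))
      .map((pr) => `- ${pr.title}`),
    internalNotes: recent.map((pr) => `- #${pr.number} ${pr.title} [${pr.labels.join(", ")}]`),
  };
}

const sampleDraft = draftChangelog(
  [{ number: 1, title: "Add CSV export", labels: ["feature"], mergedAt: new Date(Date.now() - 60 * 60 * 1000) }],
  new Date(),
);
console.log(sampleDraft.customerFacing.join("\n"));
```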

The same agent updates the API docs whenever a route signature changes. We use OpenAPI specs, so it diffs the schema, finds the affected endpoints, and writes new examples. According to GitHub’s April 2026 Copilot research, teams using async doc agents reduced their “stale doc” problem by 64%. I haven’t measured doc staleness myself, but my support email volume dropped roughly 18% after the docs became reliable.
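The schema-diff step can be sketched in a few lines. This TypeScript example assumes a heavily simplified spec shape (path, then HTTP method, then operation object) rather than the full OpenAPI model. `changedEndpoints` is a hypothetical helper.

```typescript
// A deliberately simplified view of an OpenAPI document: path -> method -> operation.
// Real path items can also hold non-method keys such as "parameters"; this sketch ignores that.
interface SimpleSpec {
  paths: Record<string, Record<string, unknown>>;
}

// Return "METHOD /path" for every operation that was added, removed, or changed.
function changedEndpoints(before: SimpleSpec, after: SimpleSpec): string[] {
  const changed: string[] = [];
  const allPaths = Array.from(new Set([...Object.keys(before.paths), ...Object.keys(after.paths)]));
  for (const path of allPaths) {
    const oldOps: Record<string, unknown> = before.paths[path] ?? {};
    const newOps: Record<string, unknown> = after.paths[path] ?? {};
    const methods = Array.from(new Set([...Object.keys(oldOps), ...Object.keys(newOps)]));
    for (const method of methods) {
      // JSON.stringify is a crude structural comparison (it is sensitive to key order).
      if (JSON.stringify(oldOps[method]) !== JSON.stringify(newOps[method])) {
        changed.push(`${method.toUpperCase()} ${path}`);
      }
    }
  }
  return changed.sort();
}
```

Comparing serialized operations is crude: merely reordering keys would register as a change. The output, though (a list of affected endpoints), is exactly what the doc-writing step needs as its input.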

Workflow 5: Dependency Bumps and Security Patches

Dependabot opens PRs, but it doesn’t fix the breaking changes they introduce. That’s a whole different problem. I now have a Codex Cloud workflow that grabs Dependabot’s PRs, reads the changelog of the bumped package, runs the test suite, and patches any broken usages.

For minor and patch versions, the agent merges the PR itself if all tests pass — yes, fully autonomous merge. For major version bumps, it opens a PR with a written migration plan and waits for me. This setup has handled 89 dependency updates since February without a single production incident. The previous quarter, I had two outages caused by half-merged Dependabot PRs.
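The merge policy boils down to classifying the version bump. Here is a minimal TypeScript sketch that assumes plain `MAJOR.MINOR.PATCH` version strings. `classifyBump` and `decide` are hypothetical names, not Dependabot or Codex features.

```typescript
type BumpKind = "major" | "minor" | "patch" | "unknown";
type MergeAction = "auto-merge" | "wait-for-human";

// Parse "MAJOR.MINOR.PATCH" (an optional leading "v" is allowed; any
// "-prerelease" or "+build" suffix is ignored). Returns null if unparseable.
function parseVersion(v: string): [number, number, number] | null {
  const m = /^v?(\d+)\.(\d+)\.(\d+)/.exec(v.trim());
  return m ? [Number(m[1]), Number(m[2]), Number(m[3])] : null;
}

// Report which version component changed between two releases.
function classifyBump(from: string, to: string): BumpKind {
  const a = parseVersion(from);
  const b = parseVersion(to);
  if (a === null || b === null) return "unknown";
  if (a[0] !== b[0]) return "major";
  if (a[1] !== b[1]) return "minor";
  if (a[2] !== b[2]) return "patch";
  return "unknown"; // same core version, e.g. only a prerelease tag changed
}

// The policy described above: minor and patch bumps merge themselves once the
// tests pass; major bumps, and anything we can't classify, wait for a human.
function decide(from: string, to: string, testsPassed: boolean): MergeAction {
  const kind = classifyBump(from, to);
  return testsPassed && (kind === "minor" || kind === "patch") ? "auto-merge" : "wait-for-human";
}

console.log(decide("4.17.21", "4.18.0", true)); // auto-merge
console.log(decide("4.17.21", "5.0.0", true)); // wait-for-human
```

One design caveat: under Semantic Versioning, anything in the 0.x range may change at any time, so a stricter version of this policy would treat 0.x minor bumps like majors.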

Workflow 6: Customer Support Bugs Closed by Dawn

This one is my favorite. When a customer reports a bug through my support form, the ticket goes to Linear with a label. A Claude Code agent picks up anything tagged customer-bug:reproducible, tries to reproduce it in its sandbox, and either fixes it or comments back asking for clarification.
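The pickup rule is simple enough to write down. Here is a hedged TypeScript sketch that assumes a hypothetical `Ticket` shape (Linear’s real issue objects differ). It treats the agent’s reproduction attempt as a boolean input. The ticket IDs and comment wording are made up.

```typescript
// Hypothetical, simplified ticket; Linear's real issue objects are richer.
interface Ticket {
  id: string;
  labels: string[];
}

type NextStep =
  | { kind: "ignore" } // not tagged for the agent
  | { kind: "attempt-fix" } // reproduced: write a fix and open a PR
  | { kind: "ask-for-clarification"; comment: string }; // could not reproduce

const AGENT_LABEL = "customer-bug:reproducible";

// `reproduced` stands in for whatever the agent's reproduction attempt concluded.
function nextStep(ticket: Ticket, reproduced: boolean): NextStep {
  if (!ticket.labels.includes(AGENT_LABEL)) return { kind: "ignore" };
  if (reproduced) return { kind: "attempt-fix" };
  return {
    kind: "ask-for-clarification",
    comment: `I couldn't reproduce ${ticket.id} yet. Could you share the exact steps and what you expected to happen?`,
  };
}

console.log(nextStep({ id: "SUP-42", labels: [AGENT_LABEL] }, false));
```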

About 35% of customer-reported bugs get closed without my involvement. Another 40% land as draft PRs that I review and merge. The remaining 25% need me, usually because they involve product judgment (“should we even support this edge case?”). For solopreneurs running B2B SaaS, this workflow alone justifies the entire stack.

What Six Months of Async Coding Agents Taught Me

I started running async AI coding agents in November 2025 on my smaller side project, a SaaS dashboard with maybe 800 paying users. By February I was running them on the main product, which has 4,200 users and processes about $180K monthly through Stripe. Here’s the honest report card.

The wins: I shut down my $6,000-a-month contractor relationship in late February. Total agent costs since then are $487 across both products. My average ticket-to-deploy time dropped from 3.4 days to 11 hours. And I started taking actual weekends off — the agents are watching the queue, and most things can wait until Monday review.

The losses: I broke production once in March because I rubber-stamped a Cursor Agent PR that “looked fine” and turned out to have a subtle off-by-one in a billing calculation. About 27 customers got incorrectly invoiced. I refunded everyone, apologized publicly, and wrote a postmortem. Lesson learned — I now hand-review anything touching billing code, no matter how innocent the diff looks.
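That rule can be enforced mechanically instead of relying on discipline at 7 AM. Here is a minimal TypeScript sketch. The path patterns are hypothetical, so adjust them to your own repo layout.

```typescript
// Hypothetical path patterns for code that always gets a hand review.
// Plain substring regexes are crude: /auth/ also matches "author", for example.
const HAND_REVIEW_PATTERNS: RegExp[] = [/billing/i, /invoice/i, /stripe/i, /auth/i];

// Files in a PR that must be read line by line, however small the diff.
function filesNeedingHandReview(changedFiles: string[]): string[] {
  return changedFiles.filter((file) => HAND_REVIEW_PATTERNS.some((re) => re.test(file)));
}

// A PR only qualifies for a quick skim if it touches none of those files.
function eligibleForQuickReview(changedFiles: string[]): boolean {
  return filesNeedingHandReview(changedFiles).length === 0;
}

console.log(filesNeedingHandReview(["/server/stripe.ts", "/web/src/Button.tsx", "/server/auth/session.ts"]));
```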

One more thing. I expected to feel like a worse engineer, as if I were outsourcing my craft. The opposite happened. Because 41% of my coding time now goes to code review instead of typing, my pattern-matching for bad code has gotten sharper, and I catch design flaws faster than I used to. My friend Sarah, who runs a fintech business solo, told me: “You’re not a worse engineer. You’re a better tech lead.” She’s right.

Frequently Asked Questions

What are async AI coding agents in simple terms?

Async AI coding agents are AI systems that take a coding task, such as a GitHub issue or a Slack message, complete it independently in a sandboxed environment, and open a pull request when finished. You don’t sit at the keyboard while they work. As of 2026, the leading options are Claude Code background mode, Cursor Agents, and OpenAI Codex Cloud.

How much do async coding agents cost per month for a solo founder?

For a typical solo SaaS, expect $80 to $300 a month depending on traffic and how many tickets you push through the agents. My main product runs about $190 monthly across all three tools combined. Compared to even a part-time contractor at $40-80 an hour, the math is wildly in favor of agents — assuming you actually review their output.

Are these agents safe for production code?

They’re safe if you treat them like any contractor: every PR gets reviewed, billing and auth code gets extra scrutiny, and you keep tests as the safety net. I’ve shipped 312 agent PRs to production with one regression. That ratio is honestly better than my pre-agent track record, but only because the agents force me to review everything carefully.

Which tool should I start with?

If you’re new to async coding agents, start with Cursor Agents. Because they integrate with an editor you probably already use, the learning curve is the gentlest. Move to Claude Code background mode once you have a feel for scoping prompts. Codex Cloud is the most flexible but takes the most setup.

Will async coding agents replace solo developers?

Not in 2026, and I’d argue not for a long time. They replace certain tasks within a developer’s job — bug fixes, refactors, dep bumps, doc generation. They don’t replace product taste, architecture decisions, customer empathy, or business judgment. Solo founders who learn to wield them well are getting more leverage, not less work.

The Bottom Line for Solopreneurs in 2026

If you ship code and you’re not running async AI coding agents yet, you’re leaving the most lopsided productivity gain of the decade on the table. Start with one workflow this week. Pick bug triage: it pays back fastest. Set it up Saturday morning, watch it for a week, and decide whether to expand.

Want my exact prompt templates and Sentry integration script? Subscribe to the Nomixy newsletter — I send a different solo-business automation playbook each Friday, and the next one drops the full async agent setup.